POWERFUL SOFTWARE BASED ON SUPERVISED CLASSIFICATION TECHNOLOGY TO EFFICIENTLY CLASSIFY OBJECTS OF INTEREST

Classifying Objects in Images

The Aphelion™ Imaging Software Suite includes four powerful tools for developing advanced classification applications that automatically identify the type, or class, of objects found in a set of images. The four tools are Classifier Builder (the classification application front-end) and three classifier extensions: Fuzzy Logic, Neural Network Toolkit, and Random Forest. The last two are optional Aphelion extension products.

Classifier Builder

Classifier Builder, a tool included with the Aphelion Dev product, serves as the front-end for a classification application. It is used to define training data and to configure a classifier through a supervised learning process performed on a training dataset. With ClassifierBuilder, the user can easily perform a manual classification that assigns objects of interest to user-defined classes, which is especially useful for creating a training dataset. ClassifierBuilder modifies each ObjectSet by adding a new attribute to every object; this attribute holds the object’s class.

ClassifierBuilder includes capabilities to:

- Load ObjectSets in a classification project

- Define object classes

- Select object measurements to compute

- Select attributes to use during the training process

- Compute classifier parameters automatically

- Perform a manual classification

Classifier Builder is provided with the Fuzzy Logic classifier extension, which enables the user to quickly classify objects using fuzzy logic (see the Fuzzy Logic section). As an option, the Neural Network classifier can be used for more advanced classification tasks (see the Neural Network Toolkit section).

Fuzzy Logic

The Fuzzy Logic classifier is a supervised classifier that assigns an object to a class based on the numeric values of the object’s attributes. For each class, the user interacts with Classifier Builder to define the lower and upper bounds of every user-selected attribute. In addition to the attribute bounds, the user can alter the fuzziness shape type (e.g., pass-band, cut-band, low-pass, high-pass), the fuzziness width, and the attribute weight. The classifier computes a score for each class and assigns each object to the class with the highest score. The settings of the Fuzzy Logic classifier can be saved and then loaded into Aphelion Dev to be automatically applied to any Aphelion ObjectSet.
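The scoring rule described above can be illustrated with a minimal sketch. The trapezoidal pass-band membership function, the weighted-average aggregation, and every name used here (`AttributeRule`, `pass_band`, `score`, `classify`) are assumptions made for illustration; this datasheet does not specify the formulas Aphelion actually uses, and only the pass-band shape is shown.

```python
from dataclasses import dataclass


@dataclass
class AttributeRule:
    """Hypothetical per-class rule for one attribute (pass-band shape only)."""
    low: float           # lower bound of the attribute for this class
    high: float          # upper bound of the attribute for this class
    width: float         # fuzziness width: length of the ramp outside the bounds
    weight: float = 1.0  # attribute weight in the class score


def pass_band(value, rule):
    """Membership is 1 inside [low, high] and ramps linearly to 0 over `width`."""
    if rule.low <= value <= rule.high:
        return 1.0
    if rule.width <= 0:
        return 0.0
    distance = rule.low - value if value < rule.low else value - rule.high
    return max(0.0, 1.0 - distance / rule.width)


def score(obj, rules):
    """Weighted average of the attribute memberships (assumed aggregation)."""
    total_weight = sum(rule.weight for rule in rules.values())
    weighted = sum(rule.weight * pass_band(obj[name], rule)
                   for name, rule in rules.items())
    return weighted / total_weight


def classify(obj, classes):
    """Return the class whose rules give the highest score."""
    return max(classes, key=lambda name: score(obj, classes[name]))


# Illustrative classes and bounds; the values are made up.
classes = {
    "small_round": {
        "area": AttributeRule(10, 50, 10),
        "elongation": AttributeRule(1.0, 1.5, 0.5),
    },
    "large_elongated": {
        "area": AttributeRule(40, 200, 20),
        "elongation": AttributeRule(2.0, 5.0, 1.0),
    },
}
obj = {"area": 45, "elongation": 1.2}
print(classify(obj, classes))
```

A wider fuzziness width makes the score fall off more gently outside the bounds, so objects slightly out of range still contribute to a class instead of being rejected outright.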

Neural Network Toolkit (Optional)

The Neural Network Toolkit for Classifier Builder is an Aphelion extension that is not included in Aphelion Dev. It enhances Dev with a powerful capability to automatically classify complex objects of interest based on a supervised classification of objects into categories or classes. The Neural Network Toolkit frees the user from having to specify complex rules for object classification, such as those required by probability- and information-based classifiers.

The Neural Network Toolkit was developed in partnership with the University of Caen (Normandy, France). It is based on MONNA, a software application originally developed by Dr. Olivier Lezoray, University of Caen (see footnote 1).

Random Forest Extension (Optional)

The Random Forest Extension for Classifier Builder is an Aphelion extension that is not included in Aphelion Dev. It enhances Dev with a powerful capability to automatically classify complex objects of interest based on a supervised classification of objects into categories or classes. The Extension frees the user from having to specify complex rules for object classification, such as those required by probability- and information-based classifiers.

The Random Forest Extension is based upon the R software.

Training Database Generation

The first step in creating a classification application is to build a training database using ClassifierBuilder. This includes specifying the list of object classes. The list of defined classes is saved as an XML file in a ClassifierBuilder Project. Next, a representative ObjectSet is chosen and its objects are manually assigned to object classes.
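As a rough illustration of this step, the sketch below keeps a list of classes, records manual assignments by object key, and serializes the class list to XML. The element and attribute names (`Classes`, `Class`, `id`, `name`) and the helper functions are hypothetical; the actual ClassifierBuilder Project file format is not described in this datasheet.

```python
import xml.etree.ElementTree as ET


def classes_to_xml(class_names):
    """Serialize a class list to an XML string (hypothetical schema)."""
    root = ET.Element("Classes")
    for index, name in enumerate(class_names):
        ET.SubElement(root, "Class", id=str(index), name=name)
    return ET.tostring(root, encoding="unicode")


def classes_from_xml(xml_text):
    """Read the class names back in document order."""
    root = ET.fromstring(xml_text)
    return [node.get("name") for node in root.findall("Class")]


def assign(labels, object_key, class_name, class_names):
    """Record a manual assignment, rejecting classes that were never defined."""
    if class_name not in class_names:
        raise ValueError(f"unknown class: {class_name}")
    labels[object_key] = class_name


class_names = ["cell", "debris", "cluster"]
labels = {}  # object key -> class name, i.e. the training labels
assign(labels, 1, "cell", class_names)
assign(labels, 2, "debris", class_names)
print(classes_to_xml(class_names))
```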

Classifier Settings

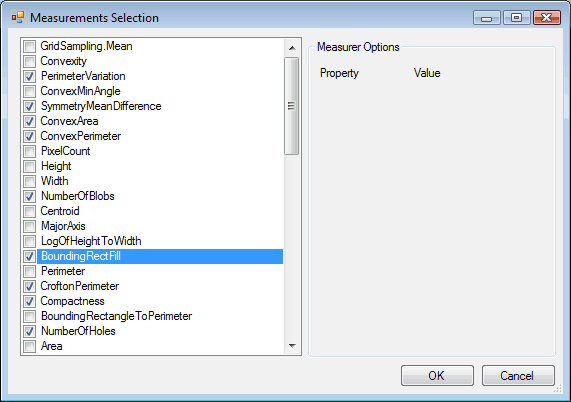

Classifier Builder - Measurement selection

The second step of the classification process is to select the classifier to use and specify its settings, that is, the user-defined parameters applied during classification.

In this step, the user also chooses the object attribute measurements to compute from the list provided in Aphelion Dev. These include shape attributes (e.g., area, perimeter, Feret diameters), texture attributes (e.g., Haralick parameters), and statistical attributes (e.g., pixel mean, minimum, maximum). The list of selected measurements is saved in the ClassifierBuilder Project.
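To make a few of these measurements concrete, the following sketch computes the area, the axis-aligned (horizontal and vertical) Feret diameters, and the pixel mean, minimum, and maximum of one object from a binary mask and a gray-level image. The function name `measure`, the attribute names, and the nested-list representation are assumptions for illustration; Aphelion Dev computes these attributes itself, and its definitions may differ (for example, in how pixel extents are counted).

```python
def measure(mask, image):
    """Measure one object.

    mask  : rows of 0/1 values marking the object's pixels
    image : rows of gray levels with the same shape as mask
    """
    coords = [(r, c)
              for r, row in enumerate(mask)
              for c, inside in enumerate(row) if inside]
    if not coords:
        raise ValueError("the mask contains no object pixels")
    values = [image[r][c] for r, c in coords]
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return {
        "area": len(coords),                            # pixel count
        "feret_horizontal": max(cols) - min(cols) + 1,  # extent along x, in pixels
        "feret_vertical": max(rows) - min(rows) + 1,    # extent along y, in pixels
        "pixel_mean": sum(values) / len(values),
        "pixel_min": min(values),
        "pixel_max": max(values),
    }


mask = [
    [0, 1, 1, 0],
    [0, 1, 1, 1],
    [0, 0, 0, 0],
]
image = [
    [5, 10, 20, 5],
    [5, 30, 40, 50],
    [5, 5, 5, 5],
]
print(measure(mask, image))
```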

The class attribute and all computed attributes are added to each loaded ObjectSet to form new ObjectSets, which can be saved and later imported into Aphelion Dev for further analysis.

Neural Network Settings

By default, the Neural Network classifier comprises multiple single-layer neural network classifiers arranged according to the MONNA architecture (see footnote 1). Combining multiple single-layer networks gives better results than using a single one.
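One common way to combine several small two-class classifiers is a majority vote over all pairs of classes. The sketch below illustrates that general idea only: it assumes one decider per pair of classes and a simple vote, which is not necessarily how MONNA combines its networks, and the toy threshold functions stand in for trained networks. All names and values are hypothetical.

```python
from collections import Counter
from itertools import combinations


def vote(features, pairwise):
    """Ask every two-class decider for a winner and return the most voted class.

    pairwise maps (class_a, class_b) to a function that returns one of the two.
    On a tie, the class that received its first vote earliest wins.
    """
    ballots = Counter(decide(features) for decide in pairwise.values())
    return ballots.most_common(1)[0][0]


class_names = ["cell", "debris", "cluster"]

# Toy deciders standing in for trained two-class networks.
pairwise = {
    ("cell", "debris"): lambda f: "cell" if f["area"] > 20 else "debris",
    ("cell", "cluster"): lambda f: "cluster" if f["area"] > 100 else "cell",
    ("debris", "cluster"): lambda f: "cluster" if f["area"] > 60 else "debris",
}

# Every pair of classes must have exactly one decider.
assert set(pairwise) == set(combinations(class_names, 2))

print(vote({"area": 50}, pairwise))
```

With N classes this scheme needs N(N-1)/2 deciders, and each one only has to solve a two-class problem, which keeps the individual networks small.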

A wizard is available to enable the user to quickly select the attributes to be used in the classification process and to define the number of neurons used to discriminate between two classes.

The number of parameters needed to define the classifier was intentionally reduced to simplify the definition process. However, the Neural Network Toolkit also enables developers to use as many layers and networks as needed, and to define such advanced parameters when working outside ClassifierBuilder.

The third step trains the classifier by computing a weight for each neuron. The weights are chosen to minimize the errors the Neural Network classifier makes when assigning classes to the objects of the training database. The output data generated by the Neural Network classifier during training can be displayed in a text window or as a chart, so the user can check the convergence of the training process by reviewing it. Once the classifier is fully defined, it can be saved in a ClassifierBuilder Project and then applied to an ObjectSet using ClassifierBuilder.
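The idea of fitting weights to minimize training errors, and of watching the error to check convergence, can be shown with a minimal sketch that trains a single sigmoid neuron by batch gradient descent. The cross-entropy error, the learning rate, and the function name `train_neuron` are assumptions chosen for illustration; the training algorithm used by the Neural Network Toolkit is not described in this datasheet.

```python
import math


def train_neuron(samples, labels, epochs=200, rate=0.5):
    """Fit the weights and bias of one sigmoid neuron by batch gradient descent.

    Returns (weights, bias, history); history[i] is the mean cross-entropy
    error measured during epoch i, before that epoch's weight update.
    """
    n_samples = len(samples)
    n_features = len(samples[0])
    weights = [0.0] * n_features
    bias = 0.0
    history = []
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        loss = 0.0
        for x, y in zip(samples, labels):
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            p = 1.0 / (1.0 + math.exp(-z))  # predicted probability of class 1
            # The small constant guards against log(0).
            loss -= y * math.log(p + 1e-12) + (1 - y) * math.log(1 - p + 1e-12)
            for i, xi in enumerate(x):
                grad_w[i] += (p - y) * xi
            grad_b += p - y
        weights = [w - rate * g / n_samples for w, g in zip(weights, grad_w)]
        bias -= rate * grad_b / n_samples
        history.append(loss / n_samples)
    return weights, bias, history


# One attribute, two classes; the values are made up.
samples = [[-1.0], [-0.5], [0.5], [1.0]]
labels = [0, 0, 1, 1]
weights, bias, history = train_neuron(samples, labels)
print(f"error: {history[0]:.3f} -> {history[-1]:.3f}")
```

Plotting `history` against the epoch number gives the kind of chart a user would review: a curve that flattens out suggests the training has converged.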

Classification Results

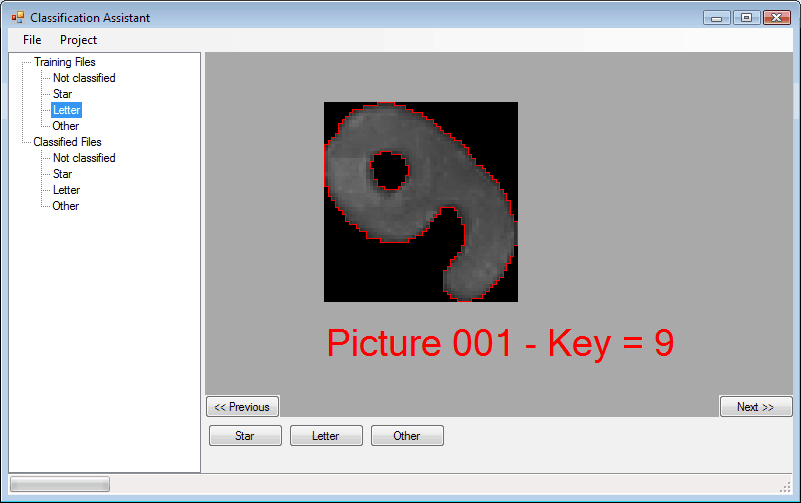

Classifier Builder - Classification assistant

The classification results can be reviewed by browsing all of the object pictures and their assigned classes. In the screen capture, the text "Picture XXX – Key = Y" links the picture with index "XXX" to its corresponding object "Y" in the ObjectSet.

After it is configured, a classifier can be executed in the Aphelion Dev environment in order to automatically apply the classifier to new ObjectSets.

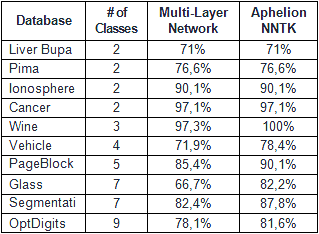

Neural Network Classifier Comparison

One of the most important benefits of the Aphelion Neural Network classifier is that it is more efficient than a standard multi-layer neural network: it has lower complexity, a shorter training cycle (partial training is possible), and better accuracy when more than two classes are defined.

Classification accuracy comparison between multi-layer neural networks and Aphelion Neural Network classifier

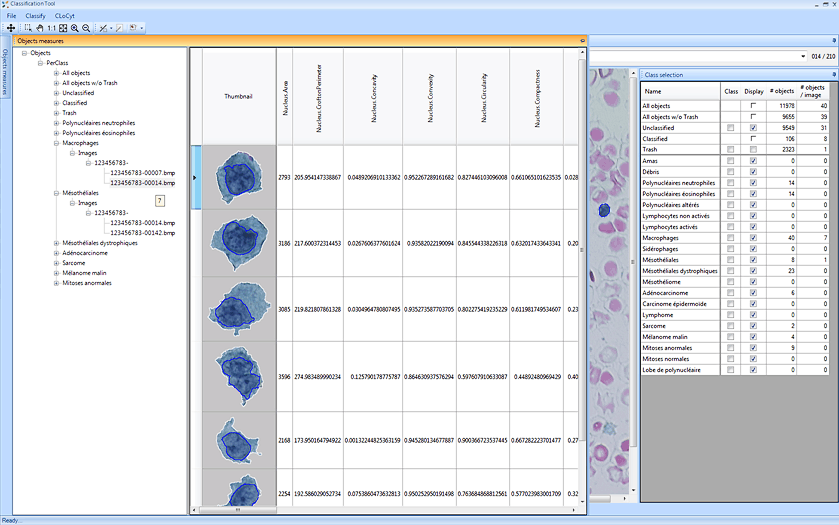

Application Fields

The Aphelion Neural Network classifier has been successfully used in the fields of biology, cytology (e.g., cell analysis), agriculture, quality control, optical character recognition (e.g., license plate analysis), remote sensing, and more. Its versatility makes it well-suited for any image domain requiring advanced classification tools.

Supported Operating Systems

Windows® XP, Vista, 7, 8, 8.1, and 10 (32-bit and 64-bit versions).

Main Benefits of the Aphelion Classification Tools

- Easy-to-use graphical interface designed specifically for classification applications

- Powerful tools for advanced image understanding using Aphelion Dev ObjectSets

- Optimized classification based on the MONNA architecture

- Capable of high-level classification for a broad range of applications

- Effective for applications where the number of classes requires a large set of attributes

1 For further information, refer to:

O. Lezoray and H. Cardot, "A Neural Network Architecture for Data Classification," International Journal of Neural Systems, vol. 11, no. 1, pp. 33-42, 2001.